
THE JUNCTION GRAMMAR MODEL OF LANGUAGE

OVERVIEW AND COMPARISON

Brigham Young University

Translation Sciences Institute

(Revision of 1977 paper)

I. GENERAL ORIENTATION

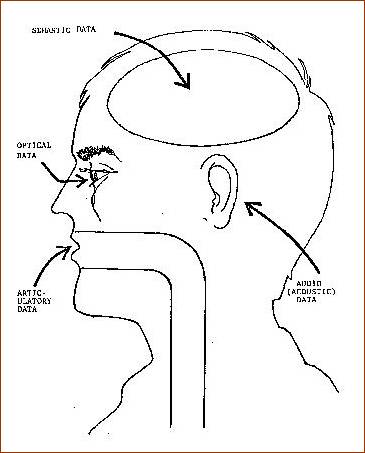

Junction Grammar (JG) does not subscribe to the doctrine that the study of syntax can be constructively pursued independently of semantics, phonology, etc. but adopts instead a systems approach that requires (and seeks to explicate) the interaction of the subcomponents of language. Seen from this perspective, linguistic description must circumscribe and interrelate all data types presumed to participate in the synthesis, analysis, and interpretation of speech. Consequently, while the semantic, articulatory, and audio components of JG are envisioned as processing data structured specifically for them, grammars of a new kind, which we shall refer to as coding grammars, are introduced to mediate between data types (see Figure 1). I shall elaborate further on this point directly.

Figure 1. Multiple data types

In Junction Grammar, data types may not be intermingled. To do so would, from the JG point of view, be tantamount to feeding instructions for both the heart and the diaphragm to the diaphragm. It is assumed that semantic instructions are not (CANNOT BE, actually) executed by a vocal tract, nor are articulatory instructions suitable for execution by a semantic tract. This means, in effect, that a ‘deep’ structure is not ‘transformed’ (in the Chomskian sense of the word) into a ‘surface’ structure without reference to any anatomical appointments whatsoever but, rather, that underlying semantic components invoke and regulate the coding grammars that synthesize the articulatory instructions, orthographic instructions, and gestural instructions required to signal overtly what is ‘deeply’ present.

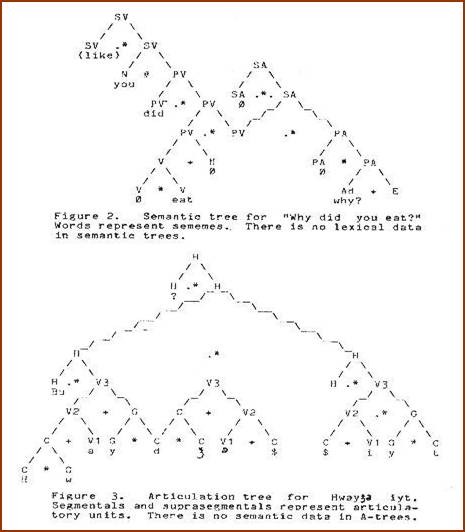

This explains why, in JG semantic representations, there are no lexical items, since these are considered to be either orthographic or articulatory entities having place elsewhere in the system. Similarly, there is no semantic information in phonological representations, since these are a different data type. However, the various data types are reciprocally symbolic as well as isomorphic – the latter to the extent necessary to enable reliable translation (not transformation) between types.

To field a metaphor, the various components of the model may be viewed as specialized "black boxes," each designed to manipulate data of a particular type, while the coding grammars may be viewed as interfaces between the boxes.

We repeat: Coding grammars code - they do not modify the base from which they code. Thus, transformation as effected by standard T-rules (structural-description/structural-change) could only occur in JG within a box, i.e. within a data type, but never between types. Indeed, were this to occur, the transfer of information between types would fail.

For example, the translation model based on JG which serves as the basis for computer-assisted translation research at BYU receives orthographic English as input data. A decoding module (grammar) designed to analyze this kind of data recodes the input as junction trees, which are semantic data. These data are in turn passed to language-specific transfer modules which transform the trees (this time, in the Chomskian sense of the word) as necessary to satisfy the output conditions imposed by the lexical coding grammars of Spanish, French, German, Portuguese, and Chinese. The customized semantic trees are then passed to the appropriate lexical coding modules, which in turn synthesize translations for the target languages named.

It should be noted that while the JG translation model uses transformation as a means of mapping semantic data from language to language, T-rules are not used at all within a single language. This does not mean that a junction grammar handles only a restricted subset of sentences within a single language. It simply means that many of the functions performed by T-rules in a transformational grammar are taken over by the coding grammars. Meanwhile, the residue becomes unnecessary as a consequence of JG’s more powerful system of base rules, which, for their part, generate a much broader variety of structures than P-rules do, making it unnecessary to obtain, for example, passives via transformation.

II. LEXICAL CODING

In order to clarify how certain functions handled by transformations in Transformational Generative Grammar (TGG) are taken over by coding grammars in Junction Grammar (JG), let us consider for a moment what is required to translate semantic data into lexical data. Five steps, each applying its own set of rules, are required, which, in the composite, constitute a lexical coding grammar. The rule types are:

1. Lexical Ordering Rules;

2. Lexical Hiatus Rules;

3. Lexical Matching Rules;

4. Lexical Agreement Rules; and

5. Lexical Phrasing Rules.

All of these rules are language-specific, which means, in effect, that each language has its own version of the rules.

Lexical Ordering Rules

Word order, of course, is not pertinent to semantic trees, but it is pertinent to the lexical strings corresponding to them. It is therefore necessary to define the ordering conventions of each language. This is accomplished in Junction Grammar by lexical ordering rules (LO-rules). These rules define the order in which the sememes (semantic units) of semantic junction trees (J-trees, as they are customarily referred to) are translated into their corresponding lexemes. In doing so, they also specify the order in which subsequent lexical rule components will apply to the nodes of the tree. This approach to ordering has been implemented in the computer-assisted translation system previously referred to and has proven to be very powerful. Whether a particular language favors Subject-Verb or Object-Verb ordering, for example, makes no difference – both can be obtained from the same J-tree.

An analogy may help to demonstrate how this concept of ordering differs from that used in a transformational grammar: In TGG there is a single set of constituents; these are reordered when certain conditions arise. In JG there are two sets of objects, one semantic and the other lexical. The semantic objects are not ordered, but their properties, together with those of corresponding lexical objects, determine the chronology of the lexical objects. Thus in JG there is no need for rules which alter the order of objects within a single representation.
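The contrast can be illustrated with a minimal Python sketch. It is not the BYU implementation; the names `Statement`, `LO_RULES`, and `lexical_order`, as well as the role labels and the two ordering patterns, are hypothetical and serve only to show one unordered semantic object yielding different lexical chronologies under language-specific LO-rules.

```python
from __future__ import annotations
from dataclasses import dataclass


@dataclass(frozen=True)
class Statement:
    """An unordered semantic statement (hypothetical stand-in for a J-tree)."""
    subject: str
    verb: str
    obj: str


# Hypothetical LO-rules: each ordering pattern lists the roles in coding order.
LO_RULES = {
    "SVO": ("subject", "verb", "obj"),
    "SOV": ("subject", "obj", "verb"),
}


def lexical_order(tree: Statement, pattern: str) -> list[tuple[int, str]]:
    """Pair each sememe with its coding-order integer; the tree is not reordered."""
    roles = LO_RULES[pattern]
    return [(position + 1, getattr(tree, role)) for position, role in enumerate(roles)]


tree = Statement(subject="BOY", verb="READ", obj="BOOK")
svo = lexical_order(tree, "SVO")
sov = lexical_order(tree, "SOV")
print(svo)
print(sov)
```

Because `Statement` is frozen, the sketch cannot reorder the semantic object even by accident; the ordering lives entirely in the integers handed to the later rule components.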

Lexical Hiatus Rules

Lexical hiatus rules (LH-rules) apply after lexical ordering rules have applied. Hiatus rules, rather than deleting semantic constituents, suppress lexical coding if certain conditions exist in the semantic data. Thus, in English, the subject constituent of an imperative (command) might be exempted from lexical coding by an LH-rule.

Lexical Matching Rules

Lexical matching rules (LM-rules) establish the symbolic link which exists between sememe and lexeme. In effect, if a given sememe SEM(1) is present in the semantic data when its node is evaluated by the matching rules of the lexical coding grammar of a particular language, LEX(1) is introduced into the corresponding lexical data. This formalizes what is commonly referred to as the symbolic relation between a linguistic sign and what it designates.

It is obvious, of course, that if lexical matching rules are to simulate what actually happens in natural language, these rules must have access not only to the denotative value of sememes but also to other information that determines choice of vocabulary (semantic environment, lexical environment, discourse environment, emotional orientation, etc.). Thus, while the simplest lexical matching rule may be regarded as a context-free link between sememe and lexeme, the matching process is obviously much more complex than that in most cases.

Lexical matching rules have the option of linking sememes either with orthographic lexemes, or with articulatory lexemes, depending on whether the desired output is printed or spoken language. Should one wish to code sememes as body language, then it would be necessary to devise a system for representing motor instructions for appropriate body parts – matching rules would then be formulated to link sememes with the packets of corresponding motor instructions.

Lexical Agreement Rules

Lexical agreement rules (LA-rules) refine the lexemes introduced by the lexical matching rules by inserting affixes in their proper places, or otherwise adjusting the base form. Lexical agreement rules are, of course, sensitive to whatever semantic information governs the use of affixes in a particular language. In practice, lexical matching rules and lexical agreement rules are formulated to apply in concert with each other. First the matching rule introduces a base form; then the agreement rule checks its semantic and lexical environment to determine whether affixation or other adjustments are necessary to refine it.

Lexical Phrasing Rules

Lexical phrasing rules group articulatory lexemes into prosodic phrases. These rules are also sensitive to the semantic environment of the sememes to which they are linked, since they account for certain suprasegmental phenomena which are governed by the meaning of what is being said. (We refer to these rules as graphological rules when they apply to orthographic lexical strings.)

Let us digress momentarily to clarify the nature of articulatory lexemes in junction grammar. They are, in effect, phonological representations that may be viewed as instructions to a vocal tract analogue. However, these phonological representations are not the kind used in standard generative phonology, being junction trees themselves, but of a different type. The information they contain is strictly phonological, depicting how phonemes are joined to form syllables, how syllables are joined to form words, how words are joined to form phrases, and how phrases are joined to form the larger articulatory units we call sentences. Thus, while semantic junction trees depict the relationship of semantic constituents, articulatory junction trees depict the relationship of articulatory constituents.

May I again emphasize that semantic trees and articulation trees, while being entirely separate from each other, do bear a symbolic relation to each other (compare Figures 2 and 3).

Thus, while articulation trees contain no semantic information at all, they do for the most part signal the specifics of the semantic trees from which they were coded by using the familiar lexical devices (word order, concord, inflection, conjugation, etc.). When they fail to do so, of course, ambiguity or puzzlement results.

L-Rule Synopsis

To summarize, let us consider what would transpire if the operation of the lexical coding rules of English were displayed visually via a configuration of two video terminals connected to the computer that houses the BYU computer-assisted translation system previously referred to.

On one screen the semantic junction tree to be lexically coded would be presented; on the other the lexical representation corresponding to the tree would be displayed. Initially, i.e. before the application of any lexical coding rules, the second video screen would be blank, while the first would display the semantic junction tree to be processed. The various lexical coding rules would apply in the order they have been discussed (ordering, hiatus, matching, agreement, and phrasing). As they applied, you would observe the following:

1. First, as the lexical ordering rules applied, you would see an integer appear beside every node in the semantic junction tree. These integers indicate the order in which the nodes will be interpreted by the other coding rules.

2. Next, the lexical hiatus rules would apply. Some of the nodes in the tree would turn red, indicating that the lexical hiatus rules have exempted them from further processing.

3. Next, lexical matching rules would apply. Now, for the first time, words would begin to appear on the second terminal. These are the base forms introduced (in their proper order) by the lexical matching rules.

4. Next, you would see these forms supplemented by the appropriate prefixes, suffixes, concord patterns, and so on. It would be apparent at this point that the lexical agreement rules have applied.

5. Finally, you would see periods, commas, capital letters, etc. appearing. This, of course, is the culmination of the lexical coding process and corresponds to the application of the phrasing rules.

We observe that the semantic tree on the first display, while augmented with ordering and hiatus markers, has not otherwise been altered or transformed. The lexical string on the second display bears a coding relation to the semantic tree, not a transformational relation. The coding grammar, in conjunction with more powerful base rules, has supplanted the customary component of T-rules.
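The five steps can be sketched in Python as a chain of functions that read the semantic tree but never rewrite it. This is an illustrative toy under stated assumptions, not the BYU system: the classes `Node` and `JTree`, the tables `ORDER` and `LEXICON`, the `plural` feature, and the single imperative hiatus rule are all invented here for the example.

```python
from __future__ import annotations
from dataclasses import dataclass


@dataclass(frozen=True)
class Node:
    role: str                          # "subject", "verb", or "object"
    sememe: str                        # a semantic unit, not a word
    features: frozenset = frozenset()


@dataclass(frozen=True)
class JTree:
    mood: str                          # "declarative" or "imperative"
    nodes: tuple                       # tuple order carries no meaning


# Hypothetical English rule tables, invented for this sketch.
ORDER = ("subject", "verb", "object")
LEXICON = {"YOU": "you", "READ": "read", "BOOK": "book"}


def ordering(tree: JTree) -> dict:
    """Step 1, LO-rules: attach a coding-order integer to every node."""
    return {node.role: ORDER.index(node.role) + 1 for node in tree.nodes}


def hiatus(tree: JTree) -> set:
    """Step 2, LH-rules: mark nodes exempt from lexical coding."""
    return {"subject"} if tree.mood == "imperative" else set()


def matching(tree: JTree, order: dict, exempt: set) -> list:
    """Step 3, LM-rules: introduce base forms in coding order."""
    ranked = sorted(tree.nodes, key=lambda node: order[node.role])
    return [(node, LEXICON[node.sememe]) for node in ranked
            if node.role not in exempt]


def agreement(pairs: list) -> list:
    """Step 4, LA-rules: adjust base forms according to semantic features."""
    return [base + "s" if "plural" in node.features else base
            for node, base in pairs]


def phrasing(words: list) -> str:
    """Step 5, graphological rules: capitalization and final punctuation."""
    text = " ".join(words)
    return text[:1].upper() + text[1:] + "."


def code_lexically(tree: JTree) -> str:
    order = ordering(tree)
    exempt = hiatus(tree)
    return phrasing(agreement(matching(tree, order, exempt)))


nodes = (
    Node("object", "BOOK", frozenset({"plural"})),
    Node("subject", "YOU"),
    Node("verb", "READ"),
)
command = JTree("imperative", nodes)
statement = JTree("declarative", nodes)

print(code_lexically(command))    # Read books.
print(code_lexically(statement))  # You read books.
```

The ordering integers and hiatus marks are returned as separate annotations, and the frozen dataclasses rule out any in-place change, which mirrors the claim that the lexical string stands in a coding relation to the tree rather than a transformational one.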

III. THE RATIONALE OF JUNCTION RULES

We now turn to a discussion of the rationale for semantic J-rules. Bear in mind that, since there are no T-rules to enhance the generative power of the junction rules, the junction rule schemata must be sufficiently powerful to generate the entire spectrum of natural language structures without recourse to the transformation of structure. The assumption that it is possible to devise such a set of production rules stems from two observations:

- First, many functions customarily accomplished via T-rules are taken over in a natural way by coding rules (ordering, hiatus, lexical insertion, etc.);

- Second, during the evolution of transformational grammar, specific decisions to eliminate specific T-rules seem to have been motivated by occasional insights into the generalities of constituent structure rather than by the consistent application of some criteria provided by the theory of transformations for deciding whether a given configuration would be obtained via P-rules instead of T-rules. In short, a trend already observable in transformational grammar suggests that as knowledge of constituent structure relations improves among transformationalists, all transformations that alter constituent structure may be superseded by specific improvements in the formulation of the base rules. For example, double-base transformations were supplanted by P-rules that introduce S directly into P-markers; T-rules designed to reduce certain clauses to phrases became unnecessary when P-rules were permitted to introduce phrases directly into deep structure, and so on.

Now what this ‘transformationally-adverse’ pattern suggests is somewhat disquieting, since it leads one to suspect that T-rules were and are nothing more than a stopgap solution to a shortfall in the expertise of analysts. A declaration by Zellig Harris, Chomsky’s mentor and the father of transformationalism, seeks to exculpate himself and others in this regard:

Some of the cruces in descriptive linguistics have been due to the search for a constituent analysis in sentence types where this does not exist because the sentences are transformationally derived from each other.[1] [emphasis added]

Harris was thus alleging that sentence types he could not analyze actually lacked structure, thereby necessitating major theoretical innovation (transformational derivation) to account for them. But, in view of the fact that an ever-expanding corpus of sentences was proving amenable to analysis, one wonders whether this move qualified as science (which he and others falling under the aegis of behaviorism so earnestly sought) or pseudo-science.

It is instructive to consider this question from a broader perspective: Suppose that scientists at large were to systematically apply the same logic to their own difficulties, consigning phenomena beyond the reach of their current paradigms to an arbitrary assortment of entities to be derived via transform from elementary phenomena. The result, of course, would be a T-rule component in every branch of science that has a residue of unknowns. Aside from their utility as a method of indexing ignorance, T-rules accomplish nothing whatsoever by way of legitimate explication, and no amount of scientist lingo or mathumbulation can make it otherwise.

Is this being unfair? I think not. Harris and other linguists influenced by a fragmented mindset declared semantics (meaning) to be out of bounds because it requires personal introspection, a form of observation held by them to be implicitly unscientific. What is out of bounds, of course, is also largely out of focus for the refs and players on the field. The systematic neglect and suppression of semantic considerations that ensued was to blame for the shortfall in constituent analysis, not the lack of constituent relations. The truth be told, had the refs actually heeded their own declarations relative to what is science and what is not, they would never have dabbled in linguistic analysis at all – it’s an introspective, semantically oriented exercise from the get-go in all its dimensions.

Relative to the comparison of the two paradigms in question, in TGG, if a sentence occurs that is not directly analyzable by way of the base rules, the analyst asks: "What structure is this a transform of?" In contrast, in JG, if a sentence occurs that is not analyzable in terms of the existing J-rule/L-rule combo, the analyst asks: "What structuring have I overlooked in the formulation of my rules?"

At this juncture, I am convinced that, given a comprehensive understanding of natural language structure, a component of base rules capable of generating the entire spectrum of structures observed in the world's languages can be devised, together with coding grammars capable of expressing these in the various output modes needed.

It may be helpful in further characterizing the position of junction theory vis-à-vis transformational theory to review the rationale that was originally set forth by Chomsky in Syntactic Structures and how that rationale was later modified. The original version of TGG was supported by arguments about the relative adequacy of different types of formal grammars for handling natural languages. It was argued that while a phrase structure grammar alone cannot generate the variety of syntactic structures observed among the languages of the world, a phrase structure grammar plus a component of T-rules can. The formal model composed of P-rules and T-rules, however, while capable of mechanically generating an impressive variety of sentences, did so without appeal to semantics. Hence, T-rules were at first allowed to apply without regard for changes of meaning they might occasion.

Eventually, however, as it became more and more apparent that any interesting model of language must give some account of semantics, T-rules were constrained so that, while they could still transform constituent structure, they could not (presumably) effect any change that altered meaning. Later, when it also became clear that even so-called surface structure syntax was semantically relevant, it was proposed that semantic interpretation of both surface structure and deep structure was necessary.

What transformationalists have been loath to acknowledge in this regard is that this revision of the model effectively nullified the very premise that had given birth to the theory of transformations, namely, that some surface configurations can’t be analyzed independently because they have no constituent structure of their own. How, pray tell, can a configuration devoid of analyzable structure support semantic interpretation? At this point, like it or not, both the P-rules and the T-rules of TGG were dealing in structural semantics, whereas at its christening, as we have noted, semantics in any form was declared to be extra-scientific.

These developments, of course, are consistent with our theme, namely, that semantics, once declared in bounds, effectively demolished the foundations of TGG. But not to worry, language itself is the ultimate Houdini, able to preserve and propagate illusions ad infinitum. ‘Transformational’ Grammar will still be TGG when the last transform has vanished, just as Darwin’s theory of evolution purportedly remains intact even after recent declarations that nature specializes in quantum leaps rather than baby steps.[2]

The position of Junction Grammar is now, and has always been, that all constituent structure relations are semantically relevant and should be assigned by the same set of rules, namely the junction rules. The composite meaning of sentences is considered to be a function of underlying relations defined by these rules, plus the reference (denotations) of the sememes joined, plus whatever additional implications, connotations, etc. occur as by-products of the information processing that J-trees stimulate in other components of the model.

IV. AN OVERVIEW OF THE MODEL

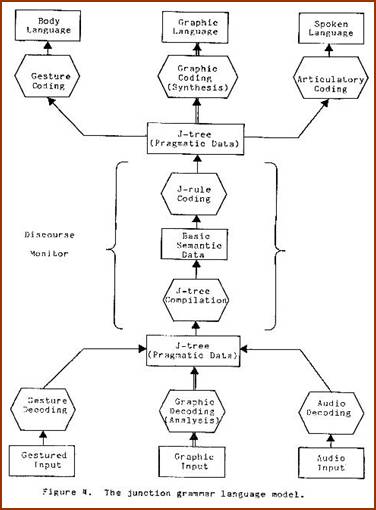

This brings us to an overview of the Junction Grammar model of Language in outline (canonical) form. From the speaker's point of view, as certain needs arise within one’s information system, one formulates semantic junction trees designed to relieve those needs. For example, suppose one senses a lack of certain information which is needed to conclude a given logical computation. Theoretically, this need stimulates the junction rule component to generate a junction tree specifically designed, when received by the hearer, to recover from his information system the required information.

Thus, from the inside out, a need stimulates the generation of a junction tree; this tree is custom-made to satisfy the need in the context of a specific discourse environment. The lexical coding grammar interprets the semantic tree and codes up an articulatory junction tree corresponding to it. The junctions in this tree are then executed by the vocal tract to produce the acoustic signal we refer to as live speech. Concurrently, other coding grammars may also access the semantic tree and code up motor instructions for those parts of the body which participate in body language. Figure 4 depicts the model described.

From the outside in, the acoustic and visual signals are received by a hearer who reverse-codes them to obtain the tree. The junctions in the tree are then executed and, it is presumed, bring about specific upgrades and changes in the information system which is being serviced. While it is beyond the scope of this article to discuss in detail the JG model as it relates to communication, suffice it to say that it is designed to simulate the transfer of information not only between internal components of the linguistic order, but also between participants in discourse.

V. THE THREE BASIC JUNCTION TYPES

In Junction Grammar, a junction is a linguistic operation that joins two or more operands in a specified way. Every junction rule consists of the following elements:

- Categorized constituent operands;

- An operation symbol specifying what is to be done with the operands by the semantic processor (statically, the operation may be viewed as a relation between the operands);

- A designation of the category of the new constituent formed by the junction.

All junctions fall into one of three basic types:

1. If the category of the new constituent formed by the junction is not the same as that of any operand used by the junction, then it is an adjunction.

2. If the category of all the operands and also that of the new constituent is the same, and if no relation of rank results from the junction, then it is a conjunction.

3. If the category of the resulting constituent is the same as that of one of the operands, and if a relation of rank between the operands results from the junction, then it is a subjunction.
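The three definitions amount to a small decision procedure, sketched below in Python. The function name `classify_junction` and the boolean `ranked` argument are assumptions of this sketch; rank is passed in explicitly because, per the definitions above, it cannot be read off the categories alone (N joined with N yielding N may be either a conjunction or a subjunction).

```python
def classify_junction(operands, result, ranked):
    """Classify a junction from its operand categories, result category, and rank.

    operands: sequence of category labels, e.g. ("V", "N")
    result:   category label of the new constituent
    ranked:   True if the junction establishes a relation of rank
    """
    if result not in operands:
        return "adjunction"        # new category differs from every operand
    if ranked:
        return "subjunction"       # result matches an operand, with rank
    if all(category == result for category in operands):
        return "conjunction"       # all categories identical, no rank
    raise ValueError("combination not covered by the three basic types")


print(classify_junction(("V", "N"), "PV", ranked=False))   # adjunction
print(classify_junction(("N", "N"), "N", ranked=False))    # conjunction
print(classify_junction(("N", "SV"), "N", ranked=True))    # subjunction
```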

Adjunction

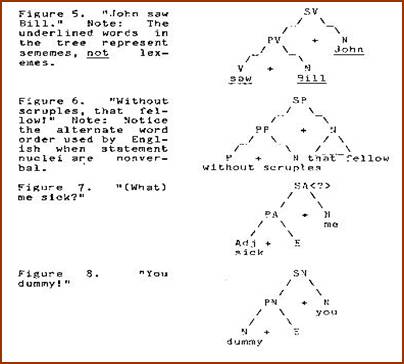

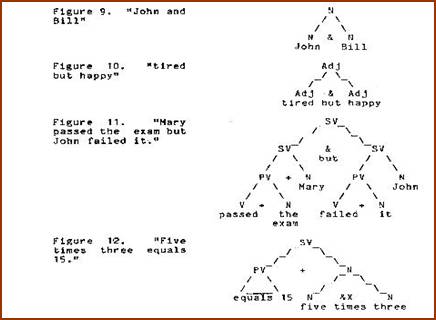

In semantic junction trees, adjunctions are used to form predicates and statements (predications). For example, if a verb is adjoined to a noun, a predicate with a verbal nucleus results (PV). If that predicate is in turn adjoined to another noun, then a statement with a verbal nucleus results (SV). Corresponding predicates and statements are obtained by using NOUN, ADJ, ADV, and PREP as adjunctive nuclei.
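As a rough illustration of the verbal case only, the following Python sketch builds a predicate (PV) and then a statement (SV) by two successive adjunctions. The classes `Leaf` and `Adjunction`, the table `ADJUNCTION_RULES`, and the order of the operands in each key are conventions of this sketch rather than JG notation; labels for predicates and statements with other nuclei are left out because they are not spelled out in the text above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Leaf:
    category: str      # e.g. "V" or "N"
    sememe: str


@dataclass(frozen=True)
class Adjunction:
    category: str      # category of the new constituent
    nucleus: object    # a Leaf or an Adjunction
    adjunct: Leaf


# Only the verbal rules named in the text: V with N gives PV, PV with N gives SV.
ADJUNCTION_RULES = {("V", "N"): "PV", ("PV", "N"): "SV"}


def adjoin(nucleus, noun):
    """Adjoin a nucleus to a noun and label the resulting constituent."""
    key = (nucleus.category, noun.category)
    if key not in ADJUNCTION_RULES:
        raise ValueError(f"no adjunction rule for {key}")
    return Adjunction(ADJUNCTION_RULES[key], nucleus, noun)


predicate = adjoin(Leaf("V", "FAIL"), Leaf("N", "EXAM"))   # predicate, PV
statement = adjoin(predicate, Leaf("N", "JOHN"))            # statement, SV
print(predicate.category, statement.category)
```

In both steps the resulting category differs from that of either operand, which is what qualifies the operation as adjunction under the definition given earlier.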

Figures 5-8 depict predicates and statements formed via adjunction. Those with subjects other than NOUN always occur as subjoined structures, which we shall explain directly. Those with NOUN subjects can all occur as super-ordinate structures.

Conjunction

Conjunction is used to form coordinate configurations with and, but, or, and perhaps other operations of a mathematical nature (plus, minus, times, etc.). In junction grammar, conjunction is permitted on all categories.

Subjunction

In junction grammar, modifiers of all kinds are subjoined to their heads. Subjunctions are divided into two broad classifications:

- Full subjunctions

- Interjunctions.

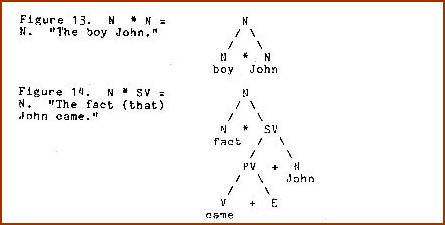

Full subjunctions correspond to complement constructions, i.e. constructions such as "The boy John," where John is the boy in question (Figure 13), or "The fact (that) John came," where "that John came" is the fact in question (Figure 14).

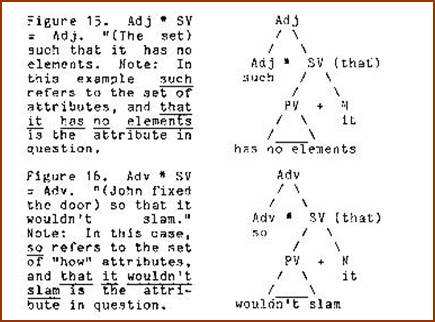

Full subjunctions occur in considerable variety. Parallel to Figure 14, where the clause complements a noun, there are cases where the clause complements an adjective (Figure 15), or an adverb (Figure 16).

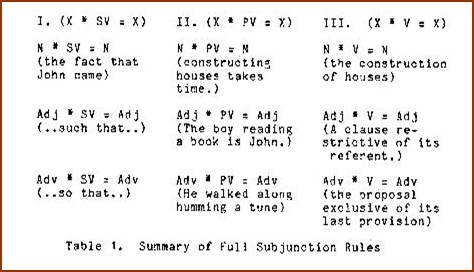

In other cases, the complement is a predicate or a predicator rather than a full statement, so that corresponding to the rules in Column I, Table 1, we also have those in Columns II and III.

To make a long story short, full subjunction corresponds to the rule schema X * Y = Z, which subtends sentential complements, participles in all their variety (both active and passive), action nominals, and other less frequently discussed derived forms. It also includes one other construction type of particular interest which we now consider because it points up an important property of junction rule schemata, namely, their predictive power.

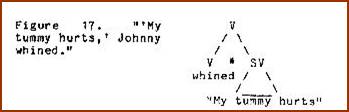

Several years ago we devised a computer program which, given the set of sememe categories used in semantic junction trees, generated the rule schema corresponding to the full subjunction formula X * Y = Z. All of the rules exemplified so far were generated by that program and easily verified by data. However, another rule, V * SV = V, was also generated. Since no one could readily associate this junction with available natural language data, we attempted to impose a constraint on the program to exclude it from the schema. This was frustrating, however, because every constraint devised also excluded rules which were valid. Finally it occurred to me that the problem rule (V * SV = V) was as easily verifiable as the others. We had simply been ignorant of the grammatical relation expressed by this rule. The structure in question corresponds to those cases where a statement is an instance of a linguistic act of some kind. For example, in "My tummy hurts," Johnny whined, the statement my tummy hurts IS the whining referred to by whined (Figure 17).
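The original program is not described in detail, so the following Python sketch is only a guess at the general idea: enumerate every instance of X * Y = Z over a category inventory. Two assumptions are made here and should be read as such. First, Z is taken to equal X, following the definition of subjunction in Section V and the rules cited above. Second, the inventories `HEADS` and `COMPLEMENTS` are hypothetical placeholders, not the actual JG category set.

```python
from itertools import product

# Hypothetical inventories, chosen only to make the sketch concrete.
HEADS = ("N", "V", "A", "Adv")          # assumed head categories (X)
COMPLEMENTS = ("SV", "PV", "V")         # assumed statement, predicate, predicator (Y)


def full_subjunction_rules(heads, complements):
    """Enumerate X * Y = X for every head/complement pair."""
    return [f"{x} * {y} = {x}" for x, y in product(heads, complements)]


rules = full_subjunction_rules(HEADS, COMPLEMENTS)
for rule in rules:
    print(rule)
```

An exhaustive enumeration of this kind has no way of skipping V * SV = V without an explicit constraint, which is why the rule surfaced in the first place and why it had to be confronted with data rather than quietly omitted.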

Many other verbs (e.g., say, know, think, etc.) refer to linguistic performances and therefore use this rule. An interesting variation of this construction occurs when animal noises are reported (e.g. "The cow goes moo, moo"), or even when motor performances are referred to vicariously with linguistic tokens (e.g., "The rabbit went hop, hop").

I cite the case of V * SV = V in order to demonstrate that junction rules represent more than idle speculation. They have theoretical substance. They not only account for observed data, but project beyond it, drawing one's attention to structural configurations not noticed before and predicting possibilities yet to be verified.

So far we have considered examples of full-subjunction. Let us now consider a special kind of subjunction called interjunction.

Interjunction

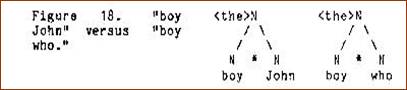

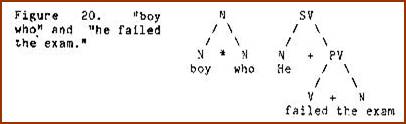

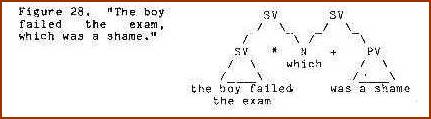

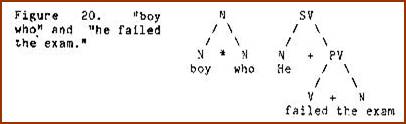

Interjunction corresponds to the structure of relative clauses and phrases. Suppose, for example, that instead of saying "the boy John", where a name is available to identify the boy in question, we must make the identification by using a relative clause ("The boy who failed the exam..."). In this case, the relative pronoun who would appear in the same position as John in the full-subjunction (Figure 18):

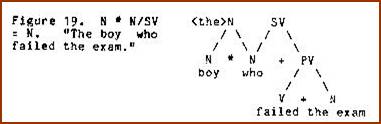

However, who, in addition to being subjoined to boy, is also adjoined as subject to the predicate failed the exam. Thus, who functions in a dual grammatical role. This is shown by simultaneously adjoining who to the predicate of which it is the subject (Figure 19):

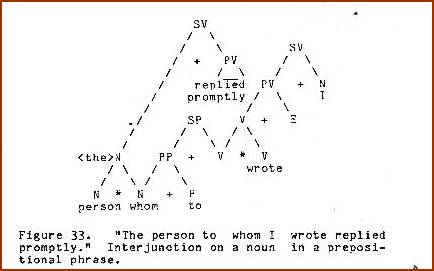

The junction rule for this structure is N * N/SV = N (N subjoin N of SV yields N). Interjunctions may be viewed as intersecting structures, i.e. structures which overlap each other at some point where there is co-reference. In the example cited above, the particular boy in question is the same person who failed the exam (Figure 20):

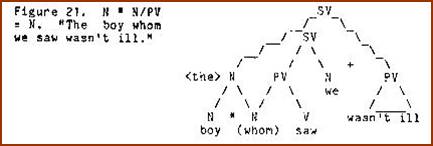
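One way to picture the dual role of who is as a single node that belongs to two structures at once. The Python sketch below is an informal rendering of that idea, not JG notation: the class `Node`, the helper functions, and the glosses are hypothetical, and object identity is used here to stand in for co-reference at the intersect.

```python
from dataclasses import dataclass


@dataclass(eq=False)               # identity-based equality: a node equals only itself
class Node:
    category: str
    gloss: str
    children: tuple = ()


def descendants(node):
    """Return the node and everything below it, depth first."""
    found = [node]
    for child in node.children:
        found.extend(descendants(child))
    return found


def intersect(first, second):
    """Return the nodes that the two structures literally share."""
    shared_ids = {id(node) for node in descendants(second)}
    return [node for node in descendants(first) if id(node) in shared_ids]


boy = Node("N", "boy")
who = Node("N", "who")
predicate = Node("PV", "failed the exam")

clause = Node("SV", "who failed the exam", (who, predicate))   # who as subject
modified = Node("N", "boy who failed the exam", (boy, who))    # N * N/SV = N

shared = intersect(modified, clause)
print([node.gloss for node in shared])
```

The only node common to both structures is who, which is subjoined to boy in one and serves as subject of the predicate in the other.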

Interjunctions are as prolific in their variety as full subjunctions. Let us consider some more examples. In Figure 21, the relative clause intersects on the direct object of its verb, thus yielding whom rather than who at the intersect.

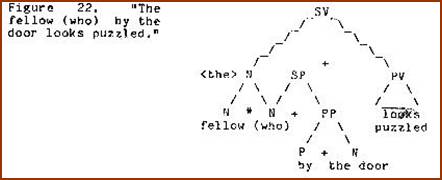

Statements whose nuclei are not V are also interjoined. The prepositional phrase modifying N is depicted in Figure 22.

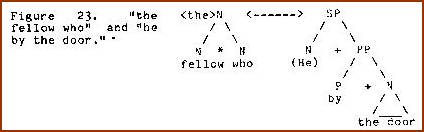

Notice that the intersect node in Figure 22 is glossed with who. This constitutes a claim that phrasal modifiers are lexical manifestations of semantic structure parallel to that of clauses. The underlying interjunction is between the head and a prepositional statement whose subject has the same identity as the fellow in question. The co-reference of who and he is depicted in Figure 23. The sense of restriction that one feels from the modification stems from the subjunction of a sememe referring to an individual (who) to a class (fellow).

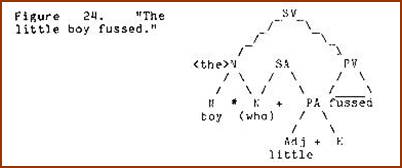

Adjective modifiers are represented in the same way (Figure 24):

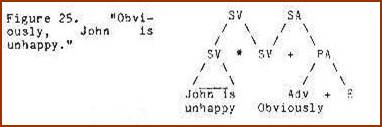

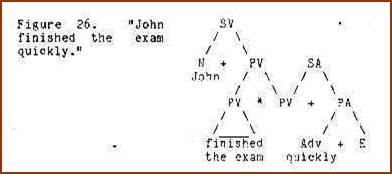

Adverbial modifiers have the same basic structure but are interjoined to constituents which are not nouns. For example, an adverb modifying an entire statement would be given as an adverbial statement interjoined to the statement (Figure 25). No word is realized for the intersect node of these and other similar structures, but the node must be present in the tree to account for their semantic effect:

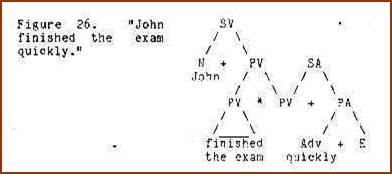

A manner adverb modifying a predicate would be given as an adverbial statement interjoined to the predicate (Figure 26):

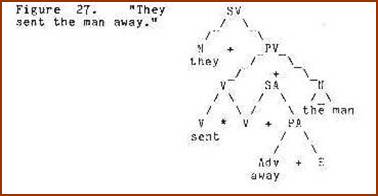

An adverb specifying directionality on a verb would be given as an adverbial statement interjoined to the verb:

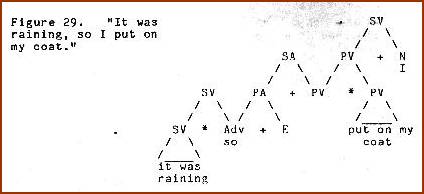

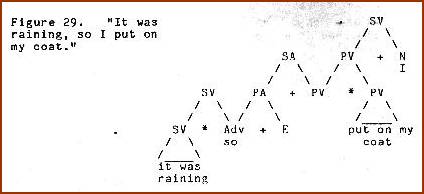

Interjunction, of course, is also a schema: X * Y/D = Z. Among the rules predicted by the formula are SV * N/SV = SV and SV * Adv/PA = SV. These correspond to sentence relative constructions:

Comparatives entail interjunction between quantificational constructions.

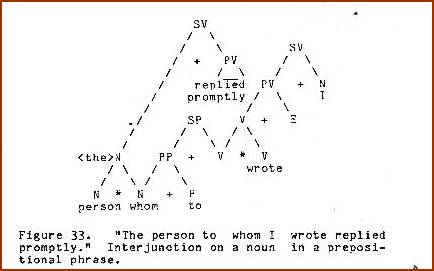

Other interesting examples of interjunction are:

- Interjunction on adverbs of manner,

- Interjunctions on adjectives, and

- Interjunction with a noun in a prepositional phrase.

See Figures 31-33, respectively.

In addition to the contrast between full-subjunction and interjunction, other distinctions are introduced on the basis of the relative referential scope of the operands being subjoined. Thus, if a modifier is restrictive in the usual sense, one operand has a smaller scope than the other. If both operands have the same referential scope, then a nonrestrictive relation exists.

A discussion of other distinctions is not possible here, but is available in the literature on junction grammar. The material presented in this paper is intended solely as an introduction to the basic concepts of JG.

[1] Harris, Zellig S., “Co-occurrence and Transformation in Linguistic Structure,” Language, Vol. 33, p. 338, 1957.

[2] Darwin’s founding thesis that nature ‘non facit saltum’ (doesn’t make jumps) is effectively demolished by more recent proposals to the effect that ‘punctuated equilibrium’ is the true state of affairs.
